TLDR

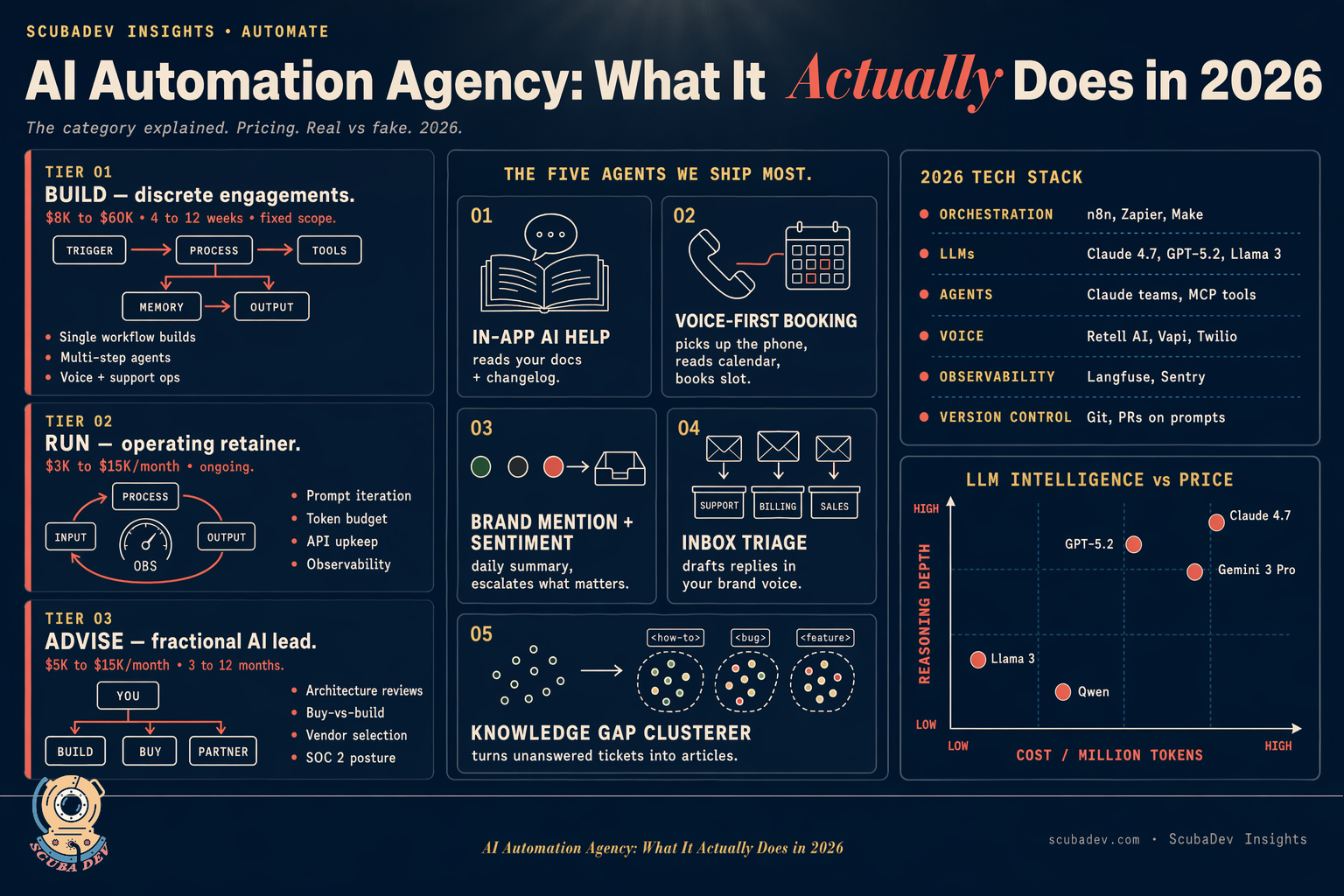

AI automation agencies design, build, and operate production AI workflows: LLM-powered agents, n8n pipelines, MCP tools, and multi-agent orchestrations. Pricing is $3K to $20K per month on retainer, or $8K to $60K one-time for a discrete build. The real ones use n8n, Claude, GPT, and MCP servers, and write production code under human review. The fake ones chain Zapier Zaps and call it AI.

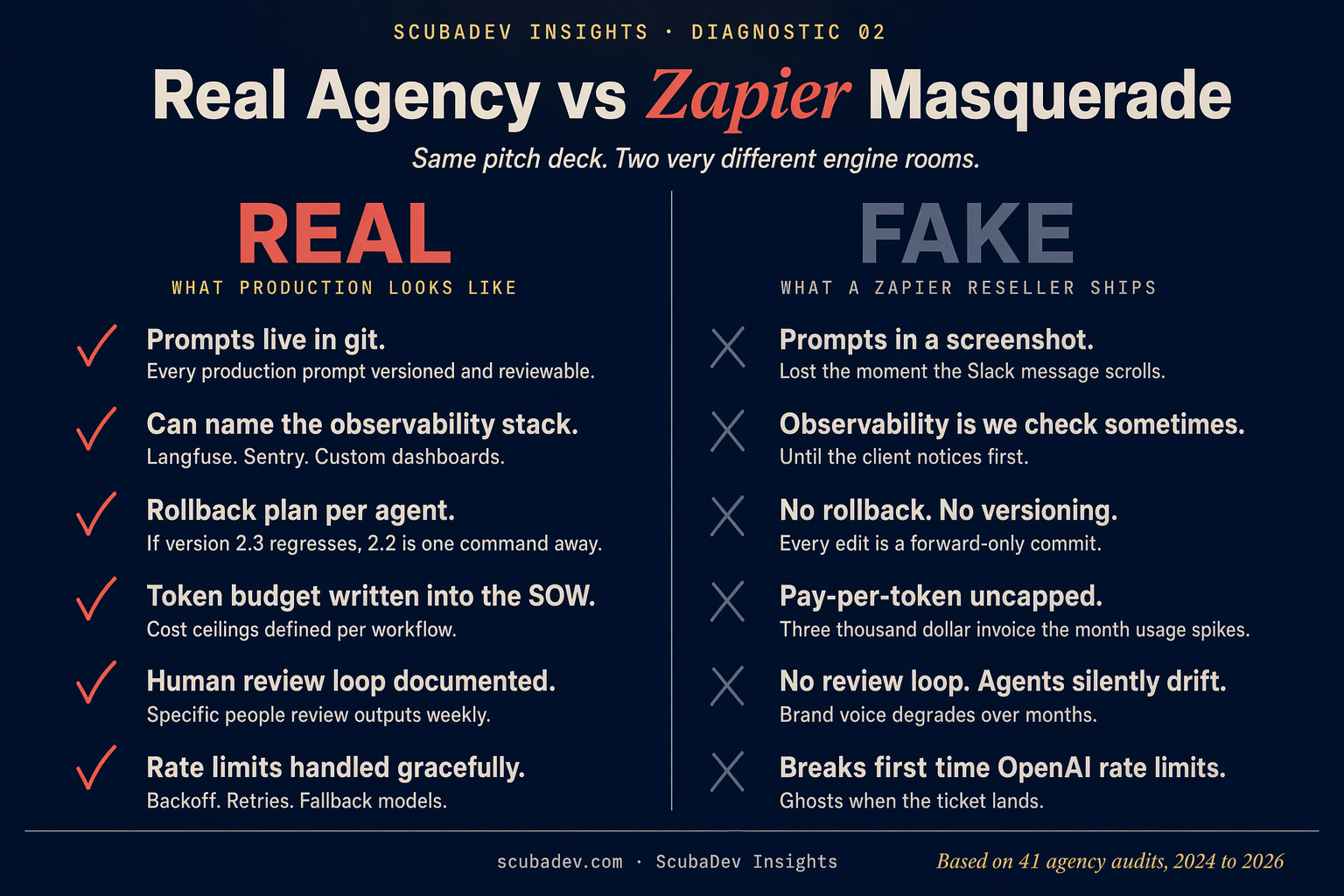

An AI automation agency ships working AI systems on a retainer. The good ones look like a small engineering team. The bad ones look like a Zapier reseller with a new logo. In 2026 the difference is easy to measure: real automation agencies ship agent workflows with observability, version control, and a rollback plan. The rest ship a Make.com scenario that falls over when the OpenAI rate limit trips. This post covers what the category is, what it costs, what to ask before signing, and the five agents we ship most often.

What an AI automation agency actually ships

The category name covers three distinct kinds of engagement. Treat them as separate services because the skills and pricing do not overlap.

Build. A fixed-scope AI system. Examples: an inbox triage agent that reads every new support message and drafts a reply in your brand voice, a voice-first booking agent that answers the phone and books appointments off the team calendar, a knowledge base gap analyzer that clusters unanswered tickets into missing articles. These are typically 4- to 10-week engagements priced at $8K to $60K.

Run. An ongoing retainer to operate the systems the agency built. Includes monitoring, token-cost management, prompt iteration, integration upkeep when APIs deprecate, and adding new workflows as the client asks. Pricing is $3K to $15K per month. This is the part most “AI automation agencies” skip, and it is why their builds fall over in month three.

Advise. An AI implementation consultant role. Architecture reviews, build-buy-partner decisions on AI features, vendor selection across the LLM stack (Anthropic, OpenAI, Google, Meta), and sometimes SOC 2 and security posture for AI systems. Priced like fractional engineering, $5K to $15K per month.

A real AI automation agency can do all three. A Zapier reseller can do Build only, and only for simple automations.

The 2026 tech stack

This is the stack we run at ScubaDev and see across the handful of other operational plays in the space.

- Orchestration. n8n is the self-hosted default. Zapier and Make for lighter use cases. We use n8n for anything production, Zapier for quick internal glue.

- LLMs. Claude 4.7 via the Anthropic API for anything requiring reasoning or long context. GPT-5.2 for vision-heavy tasks. Open models (Llama, Qwen) for cost-sensitive workloads where we control the infra.

- Agent frameworks. We use Claude’s native agent teams pattern for multi-agent work, LangChain rarely, pure API for one-shot tasks. MCP tools when the agent needs to call into structured systems.

- Voice. Retell AI and Vapi for phone agents. Twilio for telephony underneath. This is the stack behind our voice-first booking agent pattern.

- Observability. Langfuse for prompt and token tracking. Sentry for errors. Custom dashboards in Next.js for client-facing visibility.

- Version control. Agents run in repos. Prompts live in repos. PRs get reviewed. The agencies without this discipline ship, then silently break, then ghost.

If an agency pitches you and cannot tell you what their observability stack is, they do not have one.
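One way to connect the version control and observability bullets is to stamp every LLM call record with a fingerprint of the prompt that produced it, so a regression can be traced back to a specific prompt change in git. This is a minimal sketch under our own assumptions: the function names and the record fields are hypothetical, and it does not use the Langfuse or Sentry APIs.

```python
import hashlib
import json


def prompt_fingerprint(prompt_text: str) -> str:
    """Short, stable hash of the prompt text (hypothetical helper)."""
    return hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()[:12]


def call_record(workflow: str, prompt_text: str,
                input_tokens: int, output_tokens: int) -> str:
    """Build one JSON log line for an LLM call.

    The field names are illustrative assumptions, not a vendor schema.
    """
    return json.dumps(
        {
            "workflow": workflow,
            "prompt_version": prompt_fingerprint(prompt_text),
            "input_tokens": input_tokens,
            "output_tokens": output_tokens,
        },
        sort_keys=True,
    )
```

Because the fingerprint changes whenever the prompt file changes, a jump in errors or token spend can be lined up against the commit that touched the prompt.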

What it costs in 2026

Published and observed ranges for AI automation services:

| Engagement | Price | Timeline |
|---|---|---|
| Single workflow build (intake, routing, classification) | $5K to $15K | 2 to 4 weeks |
| Multi-step agent (voice, support, sales) | $15K to $60K | 4 to 12 weeks |
| Full automation retainer | $3K to $15K per month | Ongoing |
| AI implementation consulting | $5K to $15K per month | 3 to 12 months |
| Enterprise AI program (SOC 2 scope) | $30K to $100K per month | 6 to 24 months |

Cost drivers that actually matter. Token budget. Integration count. Observability requirements. Whether the agency is billing for its own cleanup. The AI automation agency model works when the agency amortizes workflow templates across clients, which is why the productized engineering play dominates this space.

The 5 most common agents we build

These are the workflows we ship most often across ScubaDev retainers. Every one maps to a live idea in our ideas library you can clone.

1. In-app AI help that reads your product docs

The in-app AI help blueprint is the most requested pattern by far. A chat widget inside your SaaS product. User asks a question. The agent answers from your product documentation, your help center, and your changelog. If the agent cannot answer, the message routes to support with context preserved. Token cost is bounded because the agent only reads docs, not the whole web.

Why it ships fast: no new UI outside the widget, no new auth surface, and most product docs are already in a format an agent can ingest. We deploy the first version in 2 weeks and iterate on the unanswered-question log for a month.
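The answer-or-route behavior described above can be sketched in a few lines. This is an illustration only: the keyword-overlap scoring stands in for whatever retrieval a real build would use, the LLM call that would phrase the answer is left out, and `answer_from_docs`, `Answer`, and `min_overlap` are hypothetical names.

```python
import re
from dataclasses import dataclass


@dataclass
class Answer:
    text: str
    routed_to_support: bool


def _tokens(text: str) -> set:
    """Lowercased word tokens; a crude stand-in for real retrieval."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer_from_docs(question: str, docs: dict, min_overlap: int = 2) -> Answer:
    """Answer only from the supplied docs, otherwise hand off to support."""
    q = _tokens(question)
    best_title, best_score = None, 0
    for title, body in docs.items():
        score = len(q & _tokens(title + " " + body))
        if score > best_score:
            best_title, best_score = title, score
    if best_title is None or best_score < min_overlap:
        # The hand-off carries the original question so context is preserved.
        return Answer(text=f"Routed to support: {question}", routed_to_support=True)
    return Answer(text=docs[best_title], routed_to_support=False)
```

The design point is that the agent has only two exits: an answer grounded in a known document, or a hand-off. There is no path where it answers from outside the docs.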

2. Voice-first booking agent

The voice-first booking agent picks up the phone, asks what the caller needs, reads the team calendar, picks the right service and person, books the slot, and sends a confirmation text. This is what we built for Mermaid Phone. The pattern generalizes to any service business with calendar-driven booking: salons, med spas, clinics, home service, real estate showings.

Real implementation notes. Retell AI for the voice layer. Twilio for the phone number. Claude for the reasoning. GHL or Cal.com or Google Calendar for the booking surface, picked by what the client already runs. Latency budget under 1.5 seconds between caller speech and agent response, which is the line where callers stop noticing they are talking to a machine.
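A latency budget is easier to hold when it is checked per stage rather than end to end. The sketch below assumes a three-stage split (speech-to-text, LLM, text-to-speech) and made-up timings. Neither comes from a real deployment, and `check_latency` is a hypothetical helper.

```python
LATENCY_BUDGET_S = 1.5  # from the text: caller speech to agent response


def check_latency(stages_s: dict, budget_s: float = LATENCY_BUDGET_S) -> dict:
    """Sum per-stage latencies (seconds) and report headroom against the budget."""
    if not stages_s:
        raise ValueError("at least one stage is required")
    total = sum(stages_s.values())
    return {
        "total_s": total,
        "within_budget": total < budget_s,
        "headroom_s": budget_s - total,
        "slowest_stage": max(stages_s, key=stages_s.get),
    }


# Illustrative numbers only, not measurements.
report = check_latency({"speech_to_text": 0.3, "llm": 0.7, "text_to_speech": 0.3})
```

Reporting the slowest stage alongside the total shows where to spend optimization effort when a call goes over budget.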

3. Brand mention agent with sentiment

The brand mention agent with sentiment watches the web and social for your brand, summarizes daily, and flags negative mentions for a human to respond to. The monitoring surface is the commoditized part. The value is the sentiment classification, the summary, and the routing logic that only escalates what matters.

A version of this shipped fast for a Laguna Foundry client with 6 consumer brands. What surprised us was how often the sentiment classifier caught things the founder would have missed in manual review. The flag-only-what-needs-a-human rule is the difference between a tool they use and a tool they turn off in month two.
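The flag-only-what-needs-a-human rule reduces to a small routing function. In this sketch the classifier is passed in as a plain callable. In practice that would be an LLM call, and the keyword-based `toy_classifier` here is only a placeholder so the example runs on its own. All names are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Mention:
    source: str
    text: str


def toy_classifier(text: str) -> str:
    """Placeholder sentiment classifier; a real build would call a model."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    if words & {"terrible", "broken", "refund", "scam"}:
        return "negative"
    if words & {"love", "great", "excellent"}:
        return "positive"
    return "neutral"


def triage_mentions(mentions: List[Mention], classify: Callable[[str], str]) -> dict:
    """Count every mention for the daily summary; escalate only negatives."""
    counts = {"positive": 0, "neutral": 0, "negative": 0}
    escalate = []
    for m in mentions:
        label = classify(m.text)
        counts[label] = counts.get(label, 0) + 1
        if label == "negative":
            escalate.append(m)
    return {"counts": counts, "escalate": escalate}
```

Everything is counted for the summary, but only the `escalate` list ever reaches a person.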

4. Inbox triage agent

The inbox triage agent reads every new message in an inbox, drafts the reply in your voice from your past-sent-mail pattern, files routine replies, and surfaces the few messages that need you. This is the single highest-ROI agent for operators whose inbox is the bottleneck.

The pattern is under-delivered by most automation agencies because it requires real voice capture (not a generic system prompt) and careful filing rules. We use a two-stage classifier: a cheap model does first-pass categorization, a more capable model drafts the reply, and the system asks for approval on anything outside a known pattern.
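The two-stage flow can be sketched as follows. Both models are injected as callables so the example stays independent of any vendor SDK. The category names in `KNOWN_PATTERNS` and the function signatures are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical categories the client has already approved for routine handling.
KNOWN_PATTERNS = {"scheduling", "invoice", "newsletter"}


@dataclass
class TriageResult:
    category: str
    draft: str
    needs_approval: bool


def triage(
    message: str,
    cheap_classify: Callable[[str], str],
    capable_draft: Callable[[str, str], str],
) -> TriageResult:
    """Stage 1 categorizes cheaply, stage 2 drafts, unknowns wait for approval."""
    category = cheap_classify(message)        # stage 1: cheap model
    draft = capable_draft(message, category)  # stage 2: more capable model
    needs_approval = category not in KNOWN_PATTERNS
    return TriageResult(category=category, draft=draft, needs_approval=needs_approval)
```

Keeping the approval rule outside both model calls means the approval decision stays deterministic and reviewable, even when the models themselves change.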

5. Knowledge base gap analyzer

The knowledge base gap analyzer clusters unanswered support tickets into topics your documentation does not cover, and drafts the missing articles for approval. This closes the loop on Agent 1 above.

Why it is boring and valuable. No user-facing surface, no latency constraint, runs weekly. The output is a list of articles to publish and a trend line of repeat-question volume going down. We ship this alongside in-app AI help, and the pair cuts support load by 40 to 70 percent within 90 days in the deployments we can measure.
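The clustering step can be illustrated with a greedy keyword-overlap grouping. This is a deliberately simple stand-in: a production version would more likely cluster on embeddings. The stopword list, the `threshold` value, and the function names are all assumptions.

```python
import re

STOPWORDS = {"how", "do", "i", "the", "a", "to", "my", "is", "can", "in", "of", "for"}


def _keywords(text: str) -> set:
    """Lowercased word tokens minus a small stopword list."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def cluster_tickets(tickets: list, threshold: float = 0.3) -> list:
    """Greedy grouping by Jaccard overlap; largest clusters first."""
    clusters = []  # each entry: (keyword set, member tickets)
    for ticket in tickets:
        kw = _keywords(ticket)
        for cluster_kw, members in clusters:
            union = kw | cluster_kw
            if union and len(kw & cluster_kw) / len(union) >= threshold:
                members.append(ticket)
                cluster_kw.update(kw)  # grow the cluster's vocabulary in place
                break
        else:
            clusters.append((set(kw), [ticket]))
    return sorted((members for _, members in clusters), key=len, reverse=True)
```

The largest clusters are the best candidates for a missing article, and re-running weekly gives the repeat-question trend line.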

How to tell a real AI automation agency from a Zapier reseller

Six questions that separate the categories.

- What is in your repo? A real agency runs prompts, agent logic, and workflow definitions in git. Resellers run everything in the Zapier UI and have no version history.

- How do you do rollback? Real answer: branch, PR review, deploy to staging, promote. Reseller answer: “we make a copy of the Zap before changes.”

- Token cost projection for this build. Real agencies can model token spend per workflow to within 30 percent. Resellers cannot, and that is why the first token blowout burns the retainer.

- What is your on-call process? Real agencies have one. Pager duty, a runbook, a rotation. Resellers answer “we will fix it when you flag it.”

- Show me an error log triage workflow. This is a basic observability workflow every agency should run on itself.

- What did you build for a client last month that broke, and how did you fix it? Real agencies have an answer. This is a reverse-interview of their incident response.
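The token cost question in the list above comes down to arithmetic an agency should be able to show. Here is a minimal sketch of a per-workflow projection, with a check against the 30 percent tolerance. The per-million-token prices in the example are placeholders, not any vendor's published rates, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    runs_per_month: int
    llm_calls_per_run: int
    input_tokens_per_call: int
    output_tokens_per_call: int


def monthly_token_cost(p: WorkflowProfile,
                       usd_per_m_input: float,
                       usd_per_m_output: float) -> float:
    """Projected monthly spend in USD from per-million-token prices."""
    calls = p.runs_per_month * p.llm_calls_per_run
    input_cost = calls * p.input_tokens_per_call / 1_000_000 * usd_per_m_input
    output_cost = calls * p.output_tokens_per_call / 1_000_000 * usd_per_m_output
    return input_cost + output_cost


def within_tolerance(projected: float, actual: float, tolerance: float = 0.30) -> bool:
    """True when actual spend lands within the tolerance of the projection."""
    return abs(actual - projected) <= tolerance * projected


# Placeholder prices: 3.0 USD per million input tokens, 15.0 per million output.
profile = WorkflowProfile(runs_per_month=10_000, llm_calls_per_run=2,
                          input_tokens_per_call=1_500, output_tokens_per_call=300)
projected = monthly_token_cost(profile, usd_per_m_input=3.0, usd_per_m_output=15.0)
```

With these placeholder inputs the projection is 180 USD per month, so actual spend between 126 and 234 USD would count as within tolerance.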

FAQ

Is an AI automation agency the same as an n8n agency?

Close, not identical. An n8n agency is a subset. AI automation agencies cover agent frameworks, LLM APIs, voice, and custom code in addition to n8n. n8n is the workflow layer, not the whole job.

Can Zapier or Make handle AI automation on their own?

For simple glue, yes. For anything that needs memory, multi-step reasoning, voice, or cost discipline, no. The line is usually around 5 steps or any workflow that calls an LLM more than once per execution.

How much does an AI automation retainer cost?

$3K to $15K per month for ongoing run-and-iterate work. The low end is a single workflow and routine iteration. The high end is 5 to 10 production workflows with observability and monthly additions.

Do AI automation agencies work with small businesses?

Yes. The agencies that do well in this category serve 5 to 50 person service businesses and early-stage SaaS. Enterprise AI is a different category with different pricing.

What is the difference between build and run?

Build is fixed-scope, shipped in weeks. Run is a monthly retainer to operate and iterate. Most real agencies require run after build, because unsupervised AI systems silently break.