TLDR

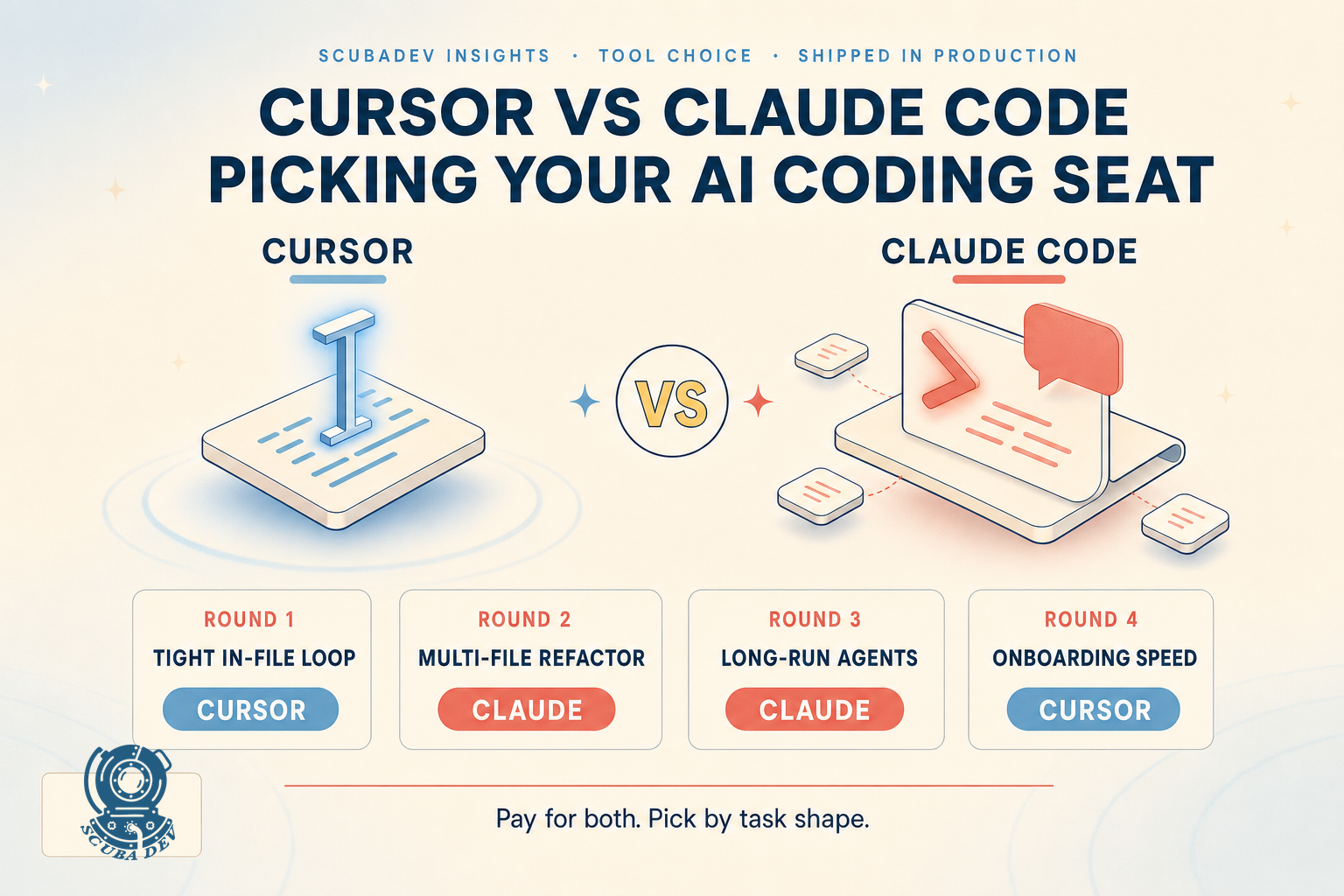

Cursor is the daily-driver IDE for tight, in-file iteration. Claude Code is the terminal-native agent for multi-file work, long-running refactors, and anything you want to hand off to a subagent pool. We pay for both. Price is a rounding error next to engineer salary (Cursor is $20 to $40 per seat per month; Claude Code's API usage runs $40 to $150 per engineer per month in practice). The deciding factor is workflow: tight feedback loops go to Cursor, context-heavy work goes to Claude Code. For most teams, the right answer is not Cursor vs Claude Code. It is Cursor and Claude Code.

We have run both tools in production for over 600 engineer-hours across 14 client projects in 2025 and 2026. This is not a “10 features of each” list. This is what shipped, what broke, what it cost, and which one we now default to by engagement type. The short version: Cursor wins on read-write round-trip speed inside a single file. Claude Code wins on multi-file refactors, long-running agents, and anything touching a CLAUDE.md. Neither is a replacement for the other, and anyone telling you otherwise has not shipped with both.

The setup: what we tested, how, and with what

Test conditions we ran both tools under.

Projects. 14 client engagements across Next.js 15 and 16, Python FastAPI, Ruby on Rails, React Native with Expo, and one Supabase Edge Functions project.

Task mix. 40 percent new feature work, 30 percent refactor, 20 percent bug fixing, 10 percent migration or infra.

Team. 6 engineers at ScubaDev plus 3 client-side engineers whose sessions we could observe.

Models. Cursor configured to prefer Claude 4.5 then 4.7 for agent tasks, GPT-5 for fast completion. Claude Code on Opus 4.7 with a Haiku fallback for cheap tasks.

Instrumentation. Internal Langfuse for prompt and token tracking. Self-reported “did this ship” and “did this break” flags per session.

Numbers in this post are aggregates across the 14 projects. When a specific number is from a single project we flag it. Numbers, not vibes.

Round 1: tight in-file iteration

Cursor wins this round cleanly. The Cmd-K inline edit and Cmd-L chat-with-selection loop is faster than Claude Code for anything under 200 lines of code. The reason is that Cursor renders proposed edits inline with a diff you accept or reject in place. Claude Code proposes edits as full file writes, which means you context-switch to review, which breaks the micro-iteration rhythm.

Typical task: rename a prop, refactor a handler, add a test for one function. Cursor averages 40 to 90 seconds round trip. Claude Code averages 90 seconds to 3 minutes for the same task, because it re-reads the file and emits a full replacement.

The winner is Cursor, full stop. If your day is mostly touching code someone else wrote last quarter, Cursor is the right default.

Round 2: multi-file refactors

Claude Code wins this round. Ask Cursor to “refactor this controller across 4 files, update the tests, and fix any imports that broke” and you get partial results. Cursor’s agent mode works, but token budget blowouts are common and the agent loses track of which files it has already touched.

Claude Code with a well-structured CLAUDE.md handles this cleanly. The pattern we use: the CLAUDE.md describes the repo conventions (file naming, test location, import style), the agent plans the refactor first, executes in parallel where safe, and reports a diff summary. Real timings from a recent retainer: a 6-file Next.js routing refactor shipped in 18 minutes with Claude Code. The same task in Cursor agent mode took 41 minutes and required 3 human corrections.

Claude Code’s advantage widens as the task scale grows. Anything over 500 lines of diff or more than 5 files, we default to Claude Code. Anything under, Cursor.
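The size rule above is simple enough to write down. A minimal sketch, assuming you can estimate files touched and diff size before starting; `TaskEstimate` and `pick_tool` are hypothetical names for illustration, not part of either tool.

```python
from dataclasses import dataclass

# Thresholds from the rule of thumb above: more than 5 files
# or more than 500 changed lines defaults to Claude Code.
MAX_FILES_FOR_CURSOR = 5
MAX_DIFF_LINES_FOR_CURSOR = 500


@dataclass
class TaskEstimate:
    files_touched: int  # hypothetical: estimated before starting the task
    diff_lines: int     # hypothetical: estimated added + removed lines


def pick_tool(task: TaskEstimate) -> str:
    """Return the default tool for a task under the size heuristic."""
    if (task.files_touched > MAX_FILES_FOR_CURSOR
            or task.diff_lines > MAX_DIFF_LINES_FOR_CURSOR):
        return "claude-code"
    return "cursor"


if __name__ == "__main__":
    print(pick_tool(TaskEstimate(files_touched=6, diff_lines=300)))  # claude-code
    print(pick_tool(TaskEstimate(files_touched=2, diff_lines=120)))  # cursor
```

The thresholds are judgment calls, not hard limits; the value of writing them down is that the whole team escalates at the same point.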

Round 3: long-running agents

Not a contest. Claude Code wins. Cursor’s agent is designed to live inside an IDE session. Claude Code is designed to run in a terminal, spawn subagents, and optionally run in the background.

We use Claude Code for: overnight migration runs, test generation passes across a whole repo, documentation sweeps, and any task where the correct answer is “spawn 6 subagents and report back in an hour.” The run-in-background flag and the notification system let a senior engineer queue a 2-hour refactor and go do something else. Cursor cannot do this.
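One way to approximate the "spawn 6 subagents" pattern from a script is to fan out several non-interactive Claude Code runs. This sketch swaps in separate `claude -p` processes (Claude Code's print, or headless, mode) in place of Claude Code's built-in subagents; the prompts and the `fan_out` helper are hypothetical, and it assumes the `claude` CLI is on your PATH and already authenticated.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence

# Hypothetical work items: one self-contained prompt per package.
PROMPTS = [
    "Add missing unit tests for packages/billing, run them, and summarize failures.",
    "Add missing unit tests for packages/auth, run them, and summarize failures.",
]


def build_command(prompt: str) -> list[str]:
    # `claude -p` runs one non-interactive Claude Code session and prints the result.
    return ["claude", "-p", prompt]


def run_headless(cmd: list[str]) -> str:
    # Capture stdout so each run's final report can be collected afterwards.
    result = subprocess.run(cmd, capture_output=True, text=True, check=False)
    return result.stdout


def fan_out(
    prompts: Sequence[str],
    runner: Callable[[list[str]], str] = run_headless,
    max_workers: int = 6,
) -> list[str]:
    """Run one headless session per prompt, up to `max_workers` at a time.

    Results come back in the same order as `prompts`.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda p: runner(build_command(p)), prompts))


# Usage (requires the `claude` CLI on PATH):
# for report in fan_out(PROMPTS):
#     print(report)
```

Parallel runs that edit the same working tree will step on each other, so in practice each run should get its own checkout (for example, a separate git worktree passed via `cwd=` to `subprocess.run`). Headless runs still obey your permission settings, so file edits may need to be pre-approved. The runner is injectable so the fan-out logic can be tested without calling the CLI.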

The trade-off: Claude Code’s long-running mode requires discipline. A subagent that hallucinates for 40 minutes is a 40-minute token bill. We use a Langfuse alert on token-spend-per-session to catch runaway agents. See our context engineering post for the specific patterns.
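The alert logic itself is small. A hedged sketch of the runaway check, assuming you can export per-call token counts tagged with a session id; `TokenEvent` and `runaway_sessions` are hypothetical names, and this is not Langfuse's API.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class TokenEvent(NamedTuple):
    session_id: str
    tokens: int  # input + output tokens for one model call


def runaway_sessions(events: Iterable[TokenEvent], limit: int) -> list[str]:
    """Return session ids whose cumulative tokens exceed `limit`,
    in the order each session crossed the line."""
    totals: dict[str, int] = defaultdict(int)
    flagged: list[str] = []
    seen: set[str] = set()
    for event in events:
        totals[event.session_id] += event.tokens
        if totals[event.session_id] > limit and event.session_id not in seen:
            seen.add(event.session_id)
            flagged.append(event.session_id)
    return flagged


if __name__ == "__main__":
    events = [TokenEvent("a", 400_000), TokenEvent("b", 50_000), TokenEvent("a", 700_000)]
    print(runaway_sessions(events, limit=1_000_000))  # ['a']
```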

Round 4: onboarding a new engineer

Cursor wins by a lot. The IDE is familiar (it is a VS Code fork), the shortcuts are discoverable, and a new engineer can ship their first Cursor-assisted PR inside 2 hours.

Claude Code is a terminal tool with deep configuration: a CLAUDE.md file, a .mcp.json, permission rules, agent definitions. New engineers take 2 to 5 days to get comfortable. The payoff is high once they do, but the ramp is real.

If your team turnover is high or you frequently onboard contractors, weight this heavily.

Round 5: cost

Both tools cost in the same bracket. Cursor is $20 per month for Pro or $40 for Business. We bill Claude Code through the Anthropic API, so it is metered per token; in practice that runs $40 to $150 per engineer per month, depending on how much multi-file work they do.

At the team level, both are rounding errors next to engineer salary. Do not pick on price. Pick on fit.
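To make "rounding error" concrete, here is the arithmetic at the top of the ranges above: $40 for Cursor Business plus $150 of Claude Code usage per month. The $250,000 annual loaded cost is a hypothetical figure for illustration, not a number from our projects.

```python
def tooling_share(monthly_tool_cost: float, annual_loaded_cost: float) -> float:
    """Annual tooling spend as a percentage of one engineer's loaded cost."""
    return 100 * monthly_tool_cost * 12 / annual_loaded_cost


# Upper end of both ranges; the loaded cost is a hypothetical example.
share = tooling_share(40 + 150, 250_000)
print(f"{share:.2f}%")  # 0.91%
```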

One cost wrinkle worth calling out. Claude Code’s token costs can blow out on a bad session. We have seen a single engineer burn $60 in tokens in one afternoon of messy agent runs. Setting an Anthropic API usage cap per API key is the fix. Cursor’s costs are flat and predictable.
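The usage cap in the Anthropic console is the hard stop; a client-side estimate can warn an engineer before they hit it. A sketch, assuming you log input and output tokens per session. The per-million-token prices are placeholders, not Anthropic's published rates, and `Pricing`, `session_cost`, and `over_budget` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    # Dollars per million tokens. Placeholder values only: check current pricing.
    input_per_mtok: float
    output_per_mtok: float


def session_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Estimated dollar cost of one session from its token counts."""
    return (
        input_tokens * pricing.input_per_mtok
        + output_tokens * pricing.output_per_mtok
    ) / 1_000_000


def over_budget(spent_today: float, next_session: float, daily_cap: float) -> bool:
    """True if the next session would push the engineer past their daily cap."""
    return spent_today + next_session > daily_cap


if __name__ == "__main__":
    placeholder = Pricing(input_per_mtok=10.0, output_per_mtok=50.0)
    cost = session_cost(2_000_000, 200_000, placeholder)
    print(cost)                           # 30.0
    print(over_budget(45.0, cost, 60.0))  # True
```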

Round 6: code review and PR quality

Draw. Both tools produce code that needs human review. Both will cheerfully introduce type errors, unused imports, and occasional hallucinated function calls.

Cursor’s advantage here is the inline diff: you see what it proposes before you accept, so the review is continuous. Claude Code’s advantage is that it can run the test suite, get the failure, and fix it in a loop without asking you. Net result: the shipped-first-try PR rate is within 5 percent across the two tools. The choice does not turn on code quality.

For the honest deep-dive on Claude Code specifically, see our Claude Code review. The review of Cursor as an IDE is coming in the Cursor vs Windsurf vs Copilot 3-up.

Round 7: integration with your existing stack

Cursor integrates with: GitHub Copilot extensions, VS Code’s extension marketplace, Linear, Slack, Notion via extensions. It lives in the IDE so anything that works in VS Code works here.

Claude Code integrates with: anything you expose as an MCP server. This is a different integration model. For teams running MCP already, Claude Code’s native support is a large advantage. For teams not running MCP, it is a learning curve.
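For teams new to MCP, the usual entry point in Claude Code is a project-scoped `.mcp.json` at the repo root that lists the servers to launch. A sketch that renders one from Python, assuming the `mcpServers` / `command` / `args` shape Claude Code reads for stdio servers; the `issue-tracker` server and its script path are hypothetical.

```python
import json
from pathlib import Path

# Hypothetical server: a local script exposing issue-tracker tools over MCP (stdio).
SERVERS = {
    "issue-tracker": {
        "command": "node",
        "args": ["./tools/issue-tracker-mcp/index.js"],
    }
}


def render_mcp_config(servers: dict) -> str:
    """Serialize server definitions in the .mcp.json layout."""
    return json.dumps({"mcpServers": servers}, indent=2) + "\n"


def write_mcp_config(repo_root: Path, servers: dict) -> Path:
    """Write a project-scoped .mcp.json so every engineer gets the same servers."""
    path = repo_root / ".mcp.json"
    path.write_text(render_mcp_config(servers))
    return path


# Usage: write_mcp_config(Path("."), SERVERS), then commit .mcp.json with the repo.
```

Committing the file is the point: the integration surface becomes part of the repo instead of each engineer's local setup.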

If your team already uses VS Code heavily, Cursor’s transition cost is near zero. If your team uses JetBrains IDEs, Cursor is a larger switch. Claude Code lives alongside any IDE, so it plays nicely with JetBrains users who do not want to leave their editor.

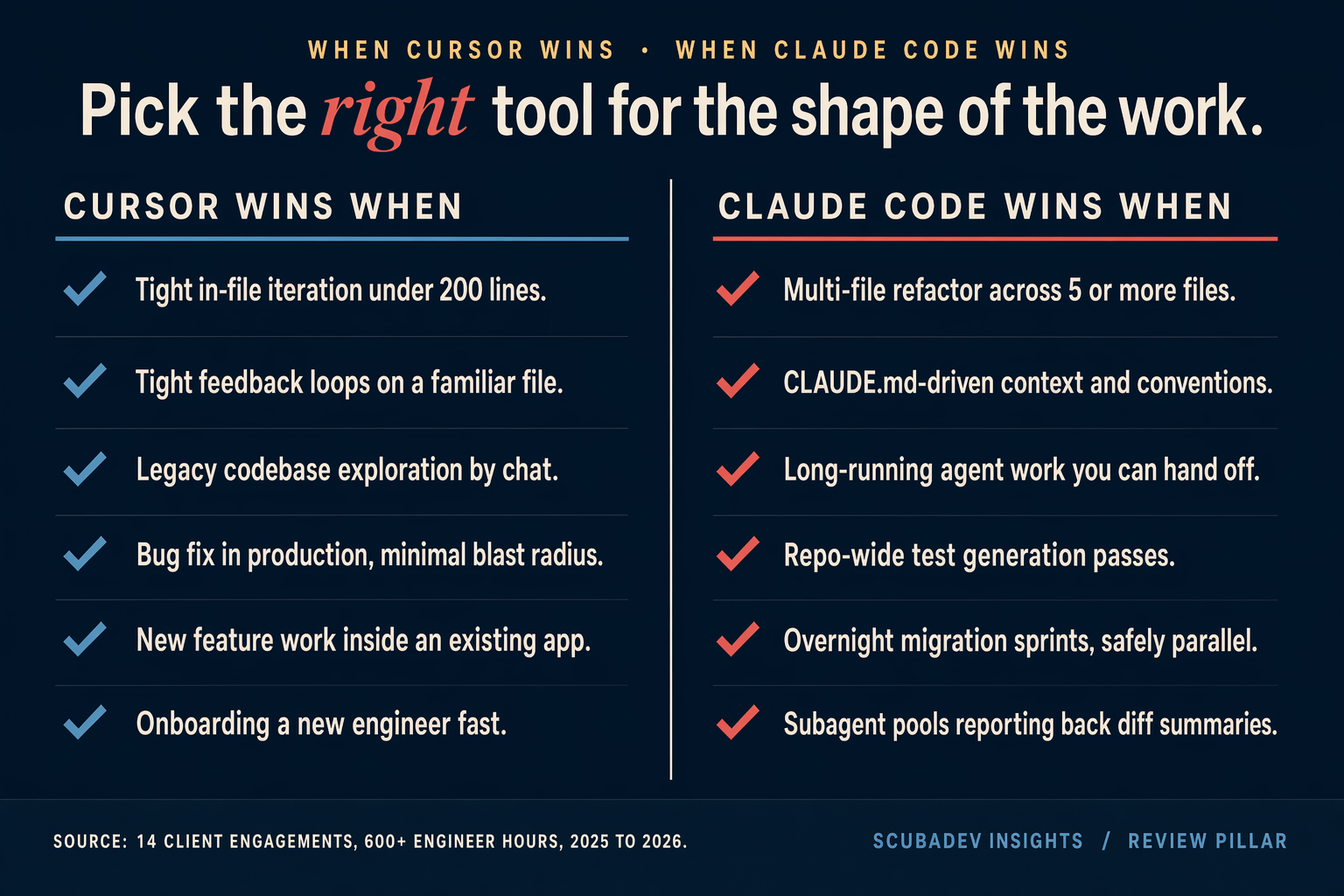

Round 8: what we default to by engagement type

This is the one that matters. What we actually pick in production.

| Engagement type | Default tool | Why |
|---|---|---|
| New feature work in an existing app | Cursor | Fast in-file loop beats context-heavy agent for adding features |
| Multi-file refactor | Claude Code | Subagent pool and multi-file diff handling |
| Test generation pass | Claude Code | Long-running, run-background, parallel safe |
| Legacy codebase exploration | Cursor | Inline chat is faster for “what does this file do” |
| Migration sprints | Claude Code | Plan first, spawn subagents, report diffs |
| Bug fix in production | Cursor | Tight feedback loop, minimal blast radius |
| New project bootstrapping | Either | Both fine for greenfield |
| Context engineering work | Claude Code | The CLAUDE.md-native tool |

The right answer for most teams is: keep both. Use Cursor as the IDE. Drop to Claude Code in a terminal pane when the task grows.

What breaks, in both tools

Honest failure modes, so this is not a puff piece.

Cursor’s weak spots. Agent mode loses track of context on refactors above 4 files. The chat history gets polluted with stale context. MCP support is growing but has not caught up with Claude Code. Cursor’s indexing also struggles on very large monorepos: we saw 3-second latency on a 2GB repo where Claude Code was sub-second.

Claude Code’s weak spots. The onboarding curve is real. Token cost variance is real. “Accept all changes” is a dangerous pattern because Claude Code will happily overwrite files you did not mean to touch. Its Bash tool will sometimes attempt destructive commands without clear confirmation, so you need a permissions config and a sandbox. The fix is discipline, not a tool change.
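One concrete form of that permissions discipline is a Claude Code `PreToolUse` hook that vetoes risky shell commands before they run. A minimal sketch, assuming the hook receives JSON on stdin with `tool_name` and `tool_input.command`, and that exit code 2 blocks the call with stderr shown to Claude (check the hooks documentation for your version). The deny-list patterns are hypothetical and deliberately conservative; the script would be registered under `hooks.PreToolUse` with a `Bash` matcher in `.claude/settings.json`, invoked with its own `--hook` argument.

```python
import json
import re
import sys

# Hypothetical deny-list; tune it for your repo. Each pattern is searched
# case-insensitively in the full shell command Claude Code wants to run.
DESTRUCTIVE_PATTERNS = [
    r"\brm\s+-\w*r\w*f|\brm\s+-\w*f\w*r",   # rm -rf / rm -fr (and -Rf)
    r"\bgit\s+push\b.*(--force\b|\s-f\b)",  # force pushes
    r"\bgit\s+reset\s+--hard\b",
    r"\bdrop\s+(table|database)\b",
]


def is_destructive(command: str) -> bool:
    return any(re.search(p, command, re.IGNORECASE) for p in DESTRUCTIVE_PATTERNS)


def decide(payload: dict) -> int:
    """Return the hook's exit code: 2 blocks the tool call, 0 lets it through."""
    if payload.get("tool_name") != "Bash":
        return 0
    command = payload.get("tool_input", {}).get("command", "")
    if is_destructive(command):
        # stderr is what Claude sees as the reason for the block.
        print(f"Blocked by hook policy: {command}", file=sys.stderr)
        return 2
    return 0


# `--hook` is this script's own flag, so running it without the flag is a no-op.
if __name__ == "__main__" and sys.argv[1:] == ["--hook"]:
    sys.exit(decide(json.load(sys.stdin)))
```

A regex deny-list is easy to route around (aliases, wrapper scripts, `sh -c`), so treat it as a tripwire alongside permission rules and a sandbox, not as the sandbox itself.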

Both tools occasionally produce code that looks correct and is wrong. The Stack Overflow 2025 survey found 66 percent of developers cite “almost right, but not quite” AI code as the top frustration. That has not been solved by either of these tools. Human review is still required.

What we run at ScubaDev

We pay for both. Every senior engineer has a Cursor Business seat and Claude Code API access with a per-engineer token cap. The default flow is:

- Open the codebase in Cursor for exploration and first pass.

- When the task grows beyond 2 files or the refactor is structural, drop to Claude Code in a terminal pane.

- Commit, push, open PR, let human review.

Our Opus 4.7 Guidebook lays out the full Claude Code configuration, agent definitions, and prompt patterns we use in production. The short version of why we pay for both: the tools are complementary, the combined cost is under 1 percent of engineer loaded cost, and picking only one is leaving velocity on the table.

FAQ

Is Cursor better than Claude Code?

Better for in-IDE work and onboarding. Worse for long-running agents and multi-file refactors. Neither is strictly better.

Can Cursor or Claude Code replace an engineer?

No. You can 2 to 3x the output of a senior engineer. You cannot replace the human review, the architecture judgment, or the product decisions. If anyone tells you otherwise, they are selling a service that will fail in 6 months.

Can I use Claude models inside Cursor?

Yes, select Claude 4.7 (Opus) in the model picker. Anthropic’s models work inside Cursor.

Is it worth paying for both?

For a serious dev team, yes. For a solo founder vibe-coding an MVP, Cursor alone is enough until the codebase passes 20 files or 5,000 lines.

How do Windsurf and GitHub Copilot compare?

Covered in our Cursor vs Windsurf vs Copilot 3-up. Short version: Copilot is behind on agent work. Windsurf is closer to Cursor.

How do I keep Claude Code costs under control?

Set a per-key usage cap in the Anthropic console. Use the Haiku model for cheap tasks. Scope agent permissions tightly. The full patterns are in our context engineering handbook.